Learn how to benchmark against competitors in AI search. This guide provides actionable strategies for analyzing your rivals and boosting your visibility.

When we talk about benchmarking against competitors, we're really talking about a strategic analysis of your performance versus theirs, using concrete, measurable data. But let's be clear: in today's AI-driven search world, this goes way beyond just tracking a list of keyword rankings. It's about deeply understanding your visibility, the sentiment around your brand, and the accuracy of information about you within AI-generated answers on platforms like Google's AI Overviews and ChatGPT.

This isn't just a "nice-to-have" exercise. It's a fundamental part of protecting and growing your market share.

Why Traditional SEO Benchmarking Is Failing

Relying on old-school SERP tracking today is like using a paper map to navigate a city that rebuilds itself every week. The search environment has fundamentally changed, and if your competitor benchmarking strategy hasn't evolved with it, you're operating with a massive blind spot.

The core problem is simple. Your legacy tools are probably great at telling you where you rank for "B2B SaaS solutions," but they are completely silent on whether an AI Overview is recommending your top three competitors instead of you. This isn't a minor detail; it's a gaping strategic vulnerability that leaves your brand dangerously exposed.

The AI-Driven Visibility Gap

The search landscape is no longer a simple list of ten blue links. Google still holds a commanding 90.82% global market share, but AI-native tools like ChatGPT and Perplexity are rapidly carving out their own space, now handling up to 10% of total queries.

Think about that. Then consider that AI Overviews already appear in 18% of Google searches, and when they do, they reduce clicks to traditional results by an average of 34.5%. The stakes have never been higher. B2B leaders have to benchmark how their brands perform inside these new AI responses, or they risk losing ground they can't see.

This creates a critical visibility gap where your brand might be functionally invisible to a growing slice of your audience, even if your traditional keyword rankings look strong. Effective benchmarking now requires asking totally different questions:

- How often does our brand get mentioned in AI answers for our most important commercial queries?

- Is the sentiment of those mentions positive, neutral, or are we getting trashed?

- Is the information presented about our products and services even accurate?

Ignoring these questions means you're busy measuring yesterday's battles while your competitors quietly win tomorrow's. It's one of the most overlooked common SEO mistakes, and even experienced teams are making it right now.

To truly grasp the shift, it helps to see the old and new approaches side-by-side.

AI Benchmarking vs Traditional SEO Benchmarking

This table shows the critical differences between legacy SEO practices and the modern necessity of AI-centric competitor analysis.

The takeaway is clear: the battlefield has expanded. While traditional SEO metrics still have a place, they no longer provide the full picture needed to compete effectively.

Key Takeaway: The shift from keyword rankings to AI-driven visibility means your current benchmarking is likely incomplete. Without monitoring AI responses, you lack a true understanding of your competitive position and are at risk of ceding market share to rivals who adapt faster.

A proactive, AI-focused approach to benchmarking isn't optional anymore; it's a requirement for survival. Your goal is to move from a reactive stance, fixing issues after they appear, to a predictive one, where you actively anticipate and shape how AI models perceive and present your brand.

What Winning Looks Like: Defining Your AI Search Metrics

Before you even think about pulling data, you have to be brutally honest about what “winning” actually means in this new AI search landscape. This is where most teams get it wrong—they try to apply old-school SEO metrics to a completely new game, and it just doesn't work.

We have to consciously move past the vanity metrics. Keyword rankings used to be the north star, but now they're just one small star in a massive constellation. When an AI generates an answer, success isn't about being position one; it's about influence, perception, and accuracy. The goal here is to tie your benchmarking directly to what moves the needle for the business.

From Rankings To Representation

It’s time to shift your core question from "Where do we rank?" to "How are we represented?" That small change in language forces a huge change in strategy. It makes you prioritize metrics that truly reflect how AI models perceive your brand's authority and credibility.

These are the metrics that matter now:

- AI Share of Voice (SoV): What percentage of AI-generated answers for your target queries mention your brand versus the competition? A high SoV tells you the AI sees you as a dominant, authoritative voice on the topic.

- Sentiment Analysis: When your brand is mentioned, is the tone positive, neutral, or negative? This is your brand's real-time reputation score in the AI ecosystem.

- Information Accuracy: How well do AI models get the facts right about your company, products, and services? Getting this wrong can kill a deal before it even starts.

- Frequency of Citations: Are AI engines citing your content as a source? This is a massive vote of confidence in your content's quality and reinforces your subject matter expertise.
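To make the first metric concrete, here is a minimal sketch of answer-level AI Share of Voice: the percentage of collected AI answers that mention each brand at least once. It assumes you already have the answer texts for your target queries; the brand names (Acme, Globex, Initech) and sample answers are hypothetical placeholders, and simple whole-word matching stands in for whatever entity detection your tooling actually uses.

```python
import re


def mention_rate(answers: list[str], brands: list[str]) -> dict[str, float]:
    """Percentage of AI answers that mention each brand at least once."""
    rates = {}
    for brand in brands:
        # Case-insensitive, whole-word match so "Acme" does not match "Acmeology".
        # Note: \b assumes the brand name starts and ends with a letter or digit.
        pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
        hits = sum(1 for text in answers if pattern.search(text))
        rates[brand] = 100.0 * hits / len(answers) if answers else 0.0
    return rates


# Hypothetical answers collected for one set of target queries.
answers = [
    "For B2B teams, Acme and Globex are both strong choices.",
    "Globex leads on integrations; Initech is cheaper.",
    "Many teams start with Acme.",
    "Initech and Globex both offer free trials.",
]

print(mention_rate(answers, ["Acme", "Globex", "Initech"]))
# {'Acme': 50.0, 'Globex': 75.0, 'Initech': 50.0}
```

Because a single answer can mention several brands, these percentages are not expected to sum to 100.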

These KPIs give you a far more honest and actionable picture of your performance than any simple ranking report ever could. They form the bedrock of a modern benchmarking framework.

Connecting Your Metrics To Business Goals

Let’s be realistic—different people in your organization care about different things. The trick is to align these new metrics with specific departmental goals. This is what turns your analysis from a "nice-to-have" report into a strategic decision-making tool.

A CMO, for instance, is probably obsessed with brand perception. They'll want to see Sentiment Analysis and AI Share of Voice for those big, top-of-funnel industry terms. Their entire focus is making sure the brand is seen as a leader.

Meanwhile, a Growth Manager is hunting for leads. They’re going to zero in on the Frequency of Citations for bottom-of-funnel content and the Information Accuracy of product specs in AI answers. This stuff has a direct line to lead quality and conversion rates. To benchmark effectively, it's also smart to know what's competitive in your industry; these Cost Per Lead (CPL) benchmarks can provide a solid frame of reference.

I see this all the time: teams try to track way too much at once. Start with just two or three primary KPIs that tie directly to your most critical business objective. You can always add more later. A focused approach is an impactful one.

Building this measurement plan is non-negotiable. A dedicated search visibility tracker gives you the granular data needed to monitor these metrics over time. When you define what success looks like from the outset, every data point you collect has a purpose, guiding you toward real insights instead of drowning you in noise.

How To Collect Accurate Competitor Data

Let’s be honest: gathering solid competitor data is where most benchmarking plans fall apart. When it comes to the new world of AI search, trying to do this manually isn't just a time-sink; it's a guaranteed way to get incomplete, unreliable information. You can't just casually ask ChatGPT, Perplexity, or Copilot the same questions every day and expect to see the real picture.

To get this right, you need a system. The goal is to build a repeatable process for tracking mentions, spotting content gaps, and keeping a pulse on brand sentiment. Without a consistent, automated stream of data, you’re just guessing, basing your strategy on random snapshots instead of meaningful trends.

Why Automation Is Non-Negotiable

If you're still relying on manual searches across AI platforms, it's time to stop. It's a dead-end road. The results are inconsistent, you can't scale it, and your team's time is far too valuable to be spent on copy-paste tasks that a machine can do better.

Automation is the only way to build a real competitive intelligence engine. It lets you:

- Track Everything, All the Time: Run your monitoring 24/7 across every AI model that matters. You'll catch mentions and shifts that would be impossible to spot manually.

- Build a Baseline: You can’t know if you’re improving if you don’t know where you started. Automation creates that crucial historical record.

- Scale Without the Headaches: Need to add five new competitors or a dozen new keywords? With an automated setup, it's a few clicks, not a few more hours of work for your team.
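As a rough illustration of the "track everything" and "build a baseline" points, the sketch below runs every tracked query against every engine and appends timestamped results to a JSON Lines log, so each run extends your historical record. The `ask_engine` function is a hypothetical placeholder, not a real API: you would swap in your AI platform's client or your monitoring tool's export. The engine labels and queries are also illustrative.

```python
import json
from datetime import datetime, timezone
from pathlib import Path

ENGINES = ["chatgpt", "perplexity", "copilot"]  # hypothetical engine labels
QUERIES = ["best project management tools", "project management API integrations"]


def ask_engine(engine: str, query: str) -> str:
    """Hypothetical placeholder: replace with a real API call or tool export."""
    return f"[{engine}] sample answer for: {query}"


def run_snapshot(log_path: Path, engines=ENGINES, queries=QUERIES) -> int:
    """Query every engine with every tracked query; append one JSON line per answer."""
    captured_at = datetime.now(timezone.utc).isoformat()
    count = 0
    with log_path.open("a", encoding="utf-8") as log:
        for engine in engines:
            for query in queries:
                record = {
                    "captured_at": captured_at,
                    "engine": engine,
                    "query": query,
                    "answer": ask_engine(engine, query),
                }
                log.write(json.dumps(record) + "\n")
                count += 1
    return count
```

In practice you would call something like `run_snapshot(Path("ai_answers.jsonl"))` from a scheduler such as cron. Adding a competitor or keyword then means editing a list rather than adding manual work.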

Setting up this workflow is the first real, practical step in your benchmarking playbook. It's what turns competitive intelligence from a one-off report into a living, breathing part of your operations. For a deeper dive, our guide to competitive intelligence explains how this continuous monitoring fuels smarter strategy.

Focus on the Right AI Search Platforms

Not all AI platforms are the same, and your B2B buyers certainly aren't using them all equally. You have to be smart about where you focus your energy.

The growth of these tools has been explosive. ChatGPT now holds an 81% share of the AI chatbot market, fields 2 billion queries a day, and has become the 4th most visited website on the planet. For B2B companies, this is a wake-up call. While Google still reigns supreme overall, AI-native platforms are already taking a real share of informational queries (23%) and generative tasks (64%). You have to be visible where your customers are asking questions.

A dashboard like Attensira's is invaluable here. It lets you see exactly how your brand visibility and sentiment stack up against the competition, engine by engine.

This side-by-side view is where the insights really pop. You can immediately see where a competitor is cleaning up or where your brand is basically invisible.

You Need Both the "What" and the "Why"

Great data collection isn't just about counting things. You need to blend the quantitative (the hard numbers) with the qualitative (the context behind them). One without the other tells you only half the story.

First, lock down your quantitative data. These are the core metrics for your scorecard:

- Mention Frequency: For your most important queries, how often does your brand pop up compared to your rivals?

- Share of Voice (SoV): Of all the brand mentions in AI-generated answers, what percentage is yours?

- Citation Count: How many times do AI models cite your domain as a source? This is a huge credibility signal.
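Here is one way these three numbers could be computed, as a sketch only. Share of Voice follows the "share of all brand mentions" definition in the list above, so every occurrence counts. Citation Count groups cited source URLs by domain using the standard library's `urllib.parse`. All brand names and `.example` domains are hypothetical, and it assumes your monitoring setup gives you answer texts plus the URLs each answer cited.

```python
import re
from collections import Counter
from urllib.parse import urlparse


def mention_counts(answers: list[str], brands: list[str]) -> Counter:
    """Total number of times each brand is named across all answers."""
    counts = Counter({brand: 0 for brand in brands})
    for text in answers:
        for brand in brands:
            pattern = rf"\b{re.escape(brand)}\b"
            counts[brand] += len(re.findall(pattern, text, flags=re.IGNORECASE))
    return counts


def share_of_voice(answers: list[str], brands: list[str]) -> dict[str, float]:
    """Each brand's percentage of all brand mentions across the answers."""
    counts = mention_counts(answers, brands)
    total = sum(counts.values())
    return {brand: (100.0 * counts[brand] / total if total else 0.0) for brand in brands}


def citation_counts(cited_urls: list[str]) -> Counter:
    """How often each domain is cited, treating www.example.com as example.com."""
    domains = Counter()
    for url in cited_urls:
        host = urlparse(url).hostname  # lowercased, port stripped; None if missing
        if host is None:
            continue
        domains[host.removeprefix("www.")] += 1
    return domains


# Hypothetical answer texts and cited sources.
answers = [
    "Acme and Globex both integrate with Slack. Acme is cheaper.",
    "Globex is the market leader, ahead of Initech.",
]
cited = [
    "https://www.acme.example/blog/integrations",
    "https://globex.example/docs",
    "https://acme.example/pricing",
]

print(share_of_voice(answers, ["Acme", "Globex", "Initech"]))
print(citation_counts(cited))
```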

Then, you dig into the qualitative side to understand what's really going on:

- Sentiment Score: Are the mentions positive, negative, or just neutral? This is mission-critical for protecting your brand's reputation.

- Content Gap Analysis: What topics or customer questions are your competitors owning in AI answers? Where are your blind spots?

- Factual Accuracy: Is the AI getting the facts right about your products and pricing? You'd be surprised how often it doesn't.
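A simple starting point for factual accuracy is to keep a "source of truth" for the facts you care about and check AI answers against it. The sketch below uses one regular expression per fact, which is deliberately naive: real answers phrase things in many ways, so treat it as an illustration, not a robust extractor. The fact names, patterns, and values (a $49 Pro plan, a 14-day trial) are hypothetical.

```python
import re

# Hypothetical source of truth: each fact has a pattern that captures the stated value.
FACTS = {
    "Pro plan price": {"pattern": r"Pro plan[^$]*\$(\d+)", "expected": "49"},
    "Free trial length": {"pattern": r"(\d+)[- ]day free trial", "expected": "14"},
}


def check_accuracy(answer: str, facts=FACTS) -> dict[str, str]:
    """Label each tracked fact as 'correct', 'wrong (<found>)', or 'not mentioned'."""
    results = {}
    for name, fact in facts.items():
        match = re.search(fact["pattern"], answer, re.IGNORECASE)
        if match is None:
            results[name] = "not mentioned"
        elif match.group(1) == fact["expected"]:
            results[name] = "correct"
        else:
            results[name] = f"wrong ({match.group(1)})"
    return results


answer = "Acme's Pro plan costs $59 per month and comes with a 14-day free trial."
print(check_accuracy(answer))
# {'Pro plan price': 'wrong (59)', 'Free trial length': 'correct'}
```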

To add another layer to your analysis, it's worth exploring broader strategies to analyze competitor websites and traffic. This helps you understand their overall digital footprint, which often informs why they are or aren't showing up in AI search.

Expert Tip: Don't just track your company name. Make sure you’re monitoring key product names, the names of your executives, and even unique, branded feature terms. This gives you a much richer, more complete picture of how your entire brand ecosystem is being portrayed.
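One way to act on this tip is to group every term you want attributed to your brand into categories and count matches per category. This is a sketch under stated assumptions: the company, product, executive, and feature names are all hypothetical, and lookarounds are used instead of `\b` so aliases that end in punctuation (like "Acme Inc.") still match. Longer aliases are tried first so "Acme Inc." is counted once rather than also as "Acme".

```python
import re

# Hypothetical brand ecosystem: every term you want attributed to your brand.
BRAND_TERMS = {
    "company": ["Acme", "Acme Inc."],
    "products": ["Boardly", "FlowPilot"],
    "executives": ["Jane Doe"],
    "features": ["SmartSync"],
}


def build_matchers(terms: dict[str, list[str]]) -> dict[str, re.Pattern]:
    """Compile one case-insensitive regex per category, trying longer aliases first."""
    matchers = {}
    for category, aliases in terms.items():
        ordered = sorted(aliases, key=len, reverse=True)
        alternation = "|".join(re.escape(alias) for alias in ordered)
        # (?<!\w) and (?!\w) act as word boundaries that also work after punctuation.
        matchers[category] = re.compile(rf"(?<!\w)(?:{alternation})(?!\w)", re.IGNORECASE)
    return matchers


def ecosystem_mentions(answer: str, matchers: dict[str, re.Pattern]) -> dict[str, int]:
    """Number of mentions in one answer for each category of brand terms."""
    return {category: len(rx.findall(answer)) for category, rx in matchers.items()}


matchers = build_matchers(BRAND_TERMS)
answer = "Jane Doe said Acme Inc. built SmartSync into Boardly; Acme also sells FlowPilot."
print(ecosystem_mentions(answer, matchers))
# {'company': 2, 'products': 2, 'executives': 1, 'features': 1}
```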

By pulling all this together—automation, a focus on the right platforms, and a mix of quantitative and qualitative data—you build a powerful intelligence engine. This gives you the raw material you need to build meaningful scorecards, find those game-changing insights, and start winning in this new AI-driven landscape.

Finding Actionable Insights In The Data

Raw data, no matter how much you collect, is just noise. It’s the analysis that turns those streams of mentions, sentiment scores, and citation counts into real competitive intelligence. Once your data collection is automated, the real work begins: translating that information into a clear strategic picture.

The most effective way I’ve seen teams do this is by creating a Competitor Benchmarking Scorecard. Think of it as your strategic dashboard, showing you exactly where you stand against key rivals in the AI search ecosystem. It’s the perfect tool for cutting through the complexity and getting everyone focused on what actually matters.

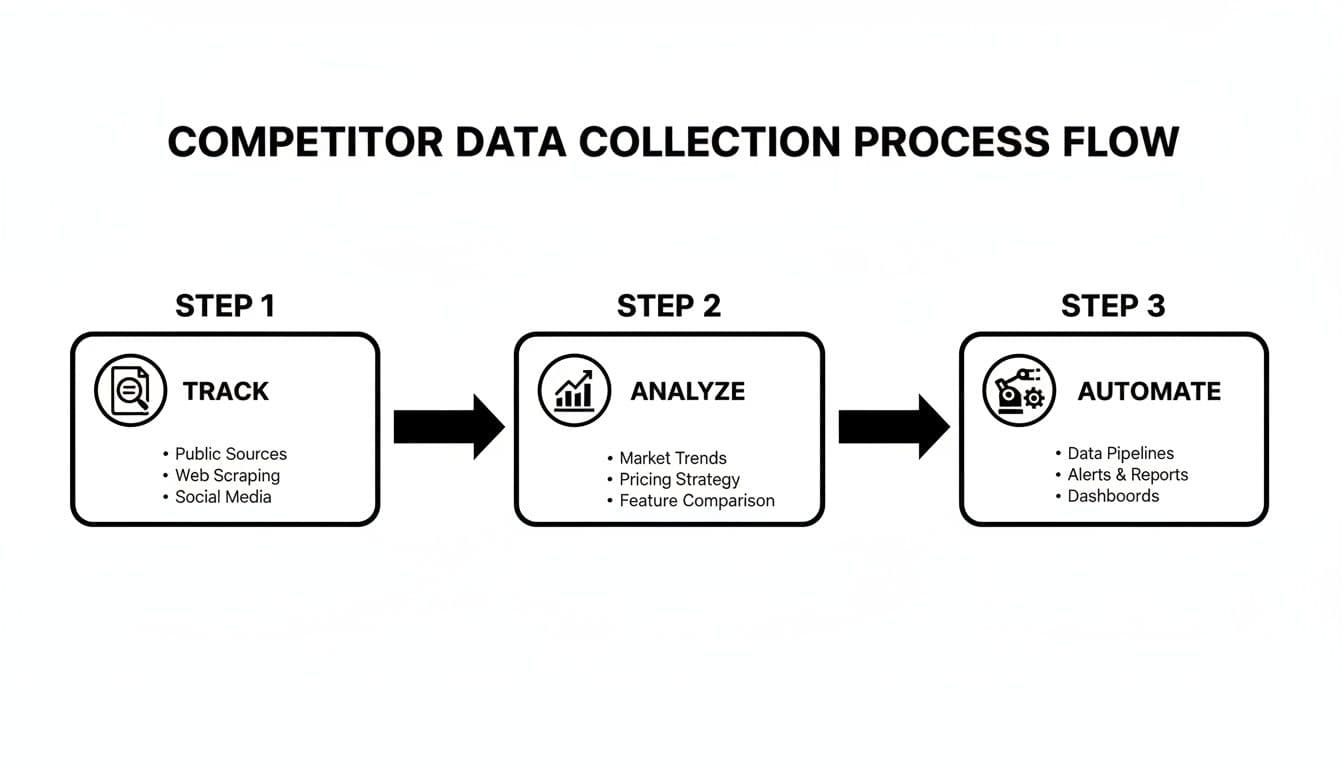

This entire process, from tracking the raw data to automating the insights, is what keeps you ahead of the game. The workflow is pretty straightforward.

This illustrates the core three-stage process—Track, Analyze, and Automate—that forms the backbone of any successful competitive benchmarking program.

Building Your Competitor Scorecard

A great scorecard isn't just a spreadsheet packed with numbers; it's a story about your position in the market. To build a useful one, you need to consolidate the data you’ve gathered into a few core scores that are easy to grasp at a glance.

Start by defining the main pillars of your scorecard. For most B2B teams I work with, these are the most impactful areas to measure:

- Visibility Score: This quantifies how often your brand gets mentioned in AI-generated answers for your target queries compared to competitors. It's a direct measure of your "AI Share of Voice."

- Sentiment Score: This score aggregates the tone of all mentions—positive, negative, and neutral—to give you a real-time pulse on how AI models perceive your brand reputation.

- Content Gap Score: This measures the share of key topics and questions your competitors currently own in AI search that your content doesn't yet cover. A high score here means you've got significant ground to make up.

By boiling down complex data into these three scores, you create a powerful framework for comparison. Suddenly, vague ideas like "brand presence" become concrete, measurable, and—most importantly—actionable.
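To show how these three scores might be derived from raw monitoring data, here is a minimal sketch. It assumes each tracked AI answer has been tagged with a topic cluster and, for every brand it mentions, a sentiment label (positive, neutral, or negative) from your tooling. The formulas are one reasonable choice, not a standard. Visibility is the share of answers mentioning the brand. Sentiment is net positive mentions as a share of all mentions, from -100 to 100. Content Gap is the share of rival-covered topics where the brand never appears. Brand names and records are hypothetical.

```python
from collections import defaultdict

# Hypothetical per-answer records: the query's topic cluster, plus each brand the
# AI answer mentioned and the sentiment label assigned to that mention.
RECORDS = [
    {"topic": "general", "mentions": {"Acme": "positive", "Globex": "neutral"}},
    {"topic": "general", "mentions": {"Acme": "neutral"}},
    {"topic": "integrations", "mentions": {"Globex": "positive"}},
    {"topic": "integrations", "mentions": {"Globex": "positive", "Acme": "negative"}},
    {"topic": "pricing", "mentions": {"Globex": "negative"}},
]


def scorecard(records, brands):
    """Visibility and Content Gap are 0-100; Sentiment runs from -100 to 100."""
    if not records:
        return {}
    present = defaultdict(set)  # topic -> brands mentioned at least once for it
    for record in records:
        present[record["topic"]].update(record["mentions"])
    card = {}
    for brand in brands:
        labels = [r["mentions"][brand] for r in records if brand in r["mentions"]]
        # Visibility: share of all tracked answers that mention the brand.
        visibility = 100.0 * len(labels) / len(records)
        # Sentiment: (positive - negative) as a share of the brand's mentions.
        net = labels.count("positive") - labels.count("negative")
        sentiment = 100.0 * net / len(labels) if labels else 0.0
        # Content gap: of topics where any rival appears, share where this brand never does.
        rival_topics = [t for t, names in present.items() if names - {brand}]
        missing = [t for t in rival_topics if brand not in present[t]]
        gap = 100.0 * len(missing) / len(rival_topics) if rival_topics else 0.0
        card[brand] = {"visibility": visibility, "sentiment": sentiment, "content_gap": gap}
    return card


for brand, scores in scorecard(RECORDS, ["Acme", "Globex"]).items():
    print(brand, {metric: round(value, 1) for metric, value in scores.items()})
```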

A well-structured scorecard helps teams quickly see where they stand. Here is a practical template demonstrating how to score and compare your brand against competitors across these crucial AI visibility metrics.

Example AI Competitor Benchmarking Scorecard

This table immediately highlights both your strengths and the opportunities your competitors are exploiting, giving you a clear starting point for your next strategic moves.

A Real-World SaaS Scenario

Let's put this into practice. Imagine a B2B SaaS company that sells project management software. They've built a scorecard to benchmark against their two main rivals. After just a month, their scorecard surfaces a critical insight.

While their own Visibility Score is decent for general queries like "best project management tools," they discover a massive gap: Competitor A's Visibility Score is 3x higher on queries related to "software integrations" or "API connectivity."

This isn't just an interesting data point; it's a flashing red light. The data shows that AI models have learned to associate Competitor A with a key decision-making factor for their target audience. This is exactly the kind of actionable insight a good scorecard is designed to uncover.
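A gap like this only shows up if you segment visibility by topic cluster rather than averaging across all queries. The sketch below computes per-topic visibility for your brand and one rival and flags clusters where the rival is at least 3x more visible (or where you are absent entirely). The 3x threshold mirrors the scenario above; the topic labels, brand names, and records are hypothetical.

```python
def visibility_by_topic(records, brand):
    """Percentage of answers in each topic cluster that mention the brand."""
    totals, hits = {}, {}
    for record in records:
        topic = record["topic"]
        totals[topic] = totals.get(topic, 0) + 1
        if brand in record["mentioned"]:
            hits[topic] = hits.get(topic, 0) + 1
    return {topic: 100.0 * hits.get(topic, 0) / n for topic, n in totals.items()}


def flag_gaps(records, you, rival, threshold=3.0):
    """Topic clusters where the rival's visibility is at least `threshold` times yours."""
    mine = visibility_by_topic(records, you)
    theirs = visibility_by_topic(records, rival)
    flagged = {}
    for topic, rival_vis in theirs.items():
        own_vis = mine.get(topic, 0.0)
        # If you never appear for a topic, any rival presence counts as a gap.
        if rival_vis > 0 and (own_vis == 0 or rival_vis / own_vis >= threshold):
            flagged[topic] = (own_vis, rival_vis)
    return flagged


# Hypothetical tracked answers, tagged by topic cluster.
records = [
    {"topic": "general", "mentioned": {"Acme", "Globex"}},
    {"topic": "general", "mentioned": {"Acme"}},
    {"topic": "integrations", "mentioned": {"Globex"}},
    {"topic": "integrations", "mentioned": {"Globex"}},
    {"topic": "integrations", "mentioned": {"Globex", "Acme"}},
    {"topic": "integrations", "mentioned": {"Globex"}},
]

print(flag_gaps(records, "Acme", "Globex"))
# {'integrations': (25.0, 100.0)}
```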

The goal of a scorecard isn't just to see who's winning. It’s to understand why they are winning on specific fronts so you can build a precise, targeted strategy to counter them.

This discovery immediately informs their next content push. Instead of creating more generic top-of-funnel articles, the marketing team now has a clear mandate: develop a series of in-depth tutorials, case studies, and guides focused on their software's integration capabilities. For a deeper look at this type of analysis, check out our guide on effective AI search monitoring.

Interpreting Trends And Pinpointing Opportunities

Your scorecard becomes truly powerful when you track it over time. Following these scores month-over-month lets you spot trends and see the direct impact of your strategic moves. Did that content push on integrations actually move the needle on your Visibility Score? Is that new customer success initiative improving your Sentiment Score?
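Tracking over time can start as simply as storing each month's scores and diffing consecutive months. This sketch assumes scores are kept in a dict keyed by "YYYY-MM" strings (which sort chronologically as plain strings); the months and values are hypothetical.

```python
def month_over_month(history):
    """Change in each score between consecutive months; keys are 'YYYY-MM' strings."""
    months = sorted(history)  # 'YYYY-MM' strings sort chronologically
    deltas = {}
    for prev, curr in zip(months, months[1:]):
        deltas[curr] = {
            metric: round(history[curr][metric] - history[prev][metric], 1)
            for metric in history[curr]
            if metric in history[prev]
        }
    return deltas


# Hypothetical monthly scorecard values for your brand.
history = {
    "2024-01": {"visibility": 42.0, "sentiment": 10.0},
    "2024-02": {"visibility": 47.5, "sentiment": 8.0},
    "2024-03": {"visibility": 55.0, "sentiment": 12.5},
}

for month, change in month_over_month(history).items():
    print(month, change)
```

Pairing each month's deltas with a log of what your team shipped that month is what lets you connect a change in score to a specific action.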

This is especially critical right now. Google's global market share has recently slipped below 90% at points, its first such dip in a decade, as Bing gains ground with its AI features. With AI Overviews appearing in 18% of searches and reducing clicks by 34.5%, the risk of becoming invisible has never been higher.

By analyzing trends, you can see where competitors are taking advantage of these shifts and where you need to fortify your presence to combat the rise of zero-click searches. Ultimately, the data tells you where to put your resources, helping you move from a "spray and pray" content strategy to a surgical approach where every action is informed by a clear understanding of the competitive landscape.

Turning Your Analysis Into A Winning Strategy

All the data in the world doesn't mean a thing if it just sits in a dashboard. The whole reason we benchmark is to find an edge—to figure out where we can act smarter and faster than the competition. You've got your scorecards and you know where the gaps are. Now, it's time to build a system that turns those insights into a real execution plan.

This is where the rubber meets the road. It’s about moving from observation to action and creating a rhythm of continuous improvement that actually sticks.

Prioritizing With The Impact vs. Effort Matrix

After a deep dive, you’ll probably have a laundry list of potential actions. It's easy to get overwhelmed. The trick is to sidestep the chaos and focus on the moves that will actually make a difference. The best tool I've found for this is a simple Impact vs. Effort Matrix.

This framework forces you to evaluate every potential action against two critical questions:

- Impact: How much will this realistically improve our core metrics, like Visibility Score or Sentiment? How will it help us hit our bigger business goals?

- Effort: What will this actually cost us in terms of time, money, and people?

When you plot your ideas on this grid, the path forward becomes crystal clear.

Quick Wins (High Impact, Low Effort): These are your no-brainers. Jump on them immediately. For example, maybe you found that AI models are quoting the wrong price for your top-tier plan. That's a low-effort fix with a huge impact on sales conversations.

Major Projects (High Impact, High Effort): Think of these as your big strategic bets. If a competitor completely owns the narrative around a key feature, you might need a full-blown content campaign to win it back. It’s a big lift, but the payoff is worth it.

Fill-Ins (Low Impact, Low Effort): These are the nice-to-haves. Hand them off to a junior team member or tackle them when you have a spare afternoon.

Reconsider (Low Impact, High Effort): These are resource traps. Acknowledge them, and then make the conscious choice to shelve them.

This exercise isn't just about sorting tasks; it's about ending debates and getting everyone aligned on what matters right now.
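If you want the matrix to live alongside your scorecard data, a small script can do the sorting. This is a sketch, assuming your team scores each action for impact and effort on a 1 to 5 scale and treats 3 or above as "high"; the scale, midpoint, and backlog items are hypothetical choices, not part of the framework itself.

```python
from dataclasses import dataclass


@dataclass
class Action:
    name: str
    impact: int  # 1-5: team's estimate of the effect on core metrics
    effort: int  # 1-5: estimated cost in time, money, and people


def quadrant(action: Action, midpoint: int = 3) -> str:
    """Map an action onto the Impact vs. Effort matrix; scores >= midpoint count as high."""
    high_impact = action.impact >= midpoint
    high_effort = action.effort >= midpoint
    if high_impact and not high_effort:
        return "Quick Win"
    if high_impact and high_effort:
        return "Major Project"
    if not high_impact and not high_effort:
        return "Fill-In"
    return "Reconsider"


def prioritize(actions: list[Action]) -> list[Action]:
    """Order by quadrant, then by higher impact, then by lower effort."""
    order = {"Quick Win": 0, "Major Project": 1, "Fill-In": 2, "Reconsider": 3}
    return sorted(actions, key=lambda a: (order[quadrant(a)], -a.impact, a.effort))


# Hypothetical backlog from a monthly review.
backlog = [
    Action("Fix wrong Pro plan price in AI answers", impact=5, effort=1),
    Action("Integrations content campaign", impact=5, effort=5),
    Action("Refresh old glossary page", impact=2, effort=1),
    Action("Rebuild entire website", impact=2, effort=5),
]

for action in prioritize(backlog):
    print(f"{quadrant(action):13} {action.name}")
```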

Establishing A Repeatable Reporting Cadence

Benchmarking isn't a "set it and forget it" project. It's a muscle you have to keep working. The key to making this a core part of your marketing motion is to build a repeatable cadence for review and action. For most B2B teams I’ve worked with, a monthly AI visibility review hits the sweet spot.

This frequency keeps the insights fresh and allows you to be nimble enough to react to changes in the competitive landscape. A consistent cycle turns benchmarking against competitors from a reactive fire drill into a proactive, strategic part of your job.

Keep your monthly review meeting tight and focused on action. The goal isn't to get lost in spreadsheet rows; it's to spot trends, lock in priorities, and assign owners.

Here’s a simple agenda that works:

- Scorecard Review (10 mins): What changed month-over-month? Quickly hit the highlights for your core scores (Visibility, Sentiment, Content Gap) versus your top two rivals.

- Key Insights & Wins (10 mins): Call out 2-3 major findings. Did a competitor's new campaign give them a bump? Did that article we published actually move the needle on sentiment?

- Priority Action Items (15 mins): Pull up the Impact vs. Effort matrix. Agree on the top 1-2 "Quick Wins" and maybe one "Major Project" to kick off this month. Assign names and dates.

- Open Questions & Roadblocks (5 mins): What's getting in the way? Solve problems in real-time.

This structure ensures your meetings produce outcomes, not just more meetings. It’s this consistent rhythm that will ultimately improve your AI search visibility over time.

Communicating Progress To Leadership

Finally, you have to sell the value of this work upstairs. Leadership doesn't need to see every data point, but they do need to understand the story. I've found a simple, one-page report is the most effective way to do this.

Your summary should nail three key questions:

- Where do we stand? Give them the 30,000-foot view of your scorecard and where you sit in the competitive pecking order.

- What have we done? Briefly list the key actions your team took based on last month's analysis.

- What are we doing next? State your top priorities for the upcoming month and connect them directly to business goals.

When you frame your findings this way, you shift the conversation from tactical weeds to strategic impact. You’re showing that you're making data-driven decisions that protect and grow the brand's position. This is how you turn a simple monitoring task into a powerful engine for strategic growth.

Frequently Asked Questions

Even with a solid playbook, you're bound to have questions as you get your competitor benchmarking program off the ground. Let's walk through some of the most common ones we hear from B2B teams who are just starting to analyze their performance in AI-driven search.

How Often Should I Run These Benchmarks?

The right cadence really depends on how fast your market moves. For most B2B companies, a full scorecard review every month is the sweet spot. It's frequent enough to catch important trends and see how competitors are shifting their strategy, but not so often that you get bogged down in the data.

That said, some metrics need a closer eye.

- Sentiment analysis is something you'll want to watch in near real-time, especially if you're in the middle of a product launch or a big campaign. You have to be ready to jump on negative feedback immediately.

- Visibility for critical, bottom-of-funnel keywords—the ones that drive revenue—probably deserves a quick check-in every week. You can't afford to lose ground on those.

Think of it as a rhythm. You do the deep-dive analysis monthly, but you keep a weekly pulse check on the KPIs that matter most to the business.
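If your monitoring runs on a scheduler, this rhythm can be written down as a small config. The sketch below is one hypothetical way to do it: each check has an interval in days, and anything whose interval has elapsed (or that has never run) is due. The check names are illustrative, "monthly" is approximated as 30 days, and daily sentiment is only a floor you would tighten during a launch.

```python
from datetime import date, timedelta

# Hypothetical cadence config mirroring the rhythm described above.
CADENCE_DAYS = {
    "sentiment": 1,         # daily floor; tighten during launches or big campaigns
    "bofu_visibility": 7,   # weekly pulse on revenue-driving queries
    "full_scorecard": 30,   # monthly deep dive, approximated as 30 days
}


def due_checks(last_run: dict[str, date], today: date) -> list[str]:
    """Checks whose interval has elapsed since they last ran; never-run checks are due."""
    due = []
    for check, days in CADENCE_DAYS.items():
        previous = last_run.get(check)
        if previous is None or today - previous >= timedelta(days=days):
            due.append(check)
    return due


last_run = {
    "sentiment": date(2024, 5, 15),
    "bofu_visibility": date(2024, 5, 8),
    "full_scorecard": date(2024, 4, 30),
}
print(due_checks(last_run, today=date(2024, 5, 15)))
# ['bofu_visibility']
```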

What’s The Difference Between Benchmarking And Competitor Analysis?

This is a fantastic question because people often use these terms interchangeably, but they're not the same thing. The simplest way to think about it is that competitor analysis is broad and often qualitative, while benchmarking is specific and quantitative.

Competitor analysis is the high-level detective work. You're looking at a competitor's entire strategy—their website, social media, pricing, and sales tactics—to get a general sense of their strengths and weaknesses.

Benchmarking, on the other hand, is the surgical process of measuring your performance against theirs using very specific metrics. You're not just noting they have a strong blog; you're measuring your AI Share of Voice against theirs for a specific set of topics and seeing who comes out on top.

Benchmarking provides the hard data to prove or disprove what you've observed in your broader competitor analysis. One tells you what they're doing, and the other tells you how well it's actually working compared to you.

Which Competitors Should I Actually Focus On?

It's really tempting to try and track every single company in your space, but that's a surefire way to get overwhelmed with useless data. A much smarter approach is to segment your competitors into two key groups for your benchmarking.

Direct Competitors: These are the companies you go head-to-head with every day. They offer a similar product to the same audience. To keep your analysis focused and actionable, you should benchmark against your top 2-3 direct competitors.

Aspirational or Indirect Competitors: This group includes market leaders in a related field or companies solving the same customer problem with a totally different approach. It’s a good idea to keep tabs on 1-2 of these players. They can be a great source of inspiration for new strategies you might otherwise miss.

By narrowing your focus, you make sure the insights you gather are sharp, relevant, and not just noise from the fringes of your market.

What Tools Do I Need for This?

Let's be honest: trying to collect this data manually just isn't going to work, especially when you're dealing with AI search. To get reliable and consistent data, you need the right tools for the job.

A platform built specifically for AI monitoring is non-negotiable. It's the only way to accurately track things like Share of Voice and sentiment as they appear in AI-generated answers. Without it, you're flying blind. True benchmarking against competitors requires a solution that automates the data collection so your team can spend their time on what really matters: analysis and strategy.

Ready to stop guessing and start winning in AI search? Attensira provides the automated dashboards and actionable insights you need to build a world-class competitor benchmarking program. See exactly where you stand and uncover your next strategic move. Get started with Attensira today.